Working with LLMs is complicated. For simple setups, like general-purpose chatbots (ChatGPT) or classification, you have few moving pieces. But when it’s time to get serious work done, you have to coax your model into doing a lot more. We’re working on Project Cyborg, a DevOps bot that can identify security flaws, find cost-saving opportunities in your cloud deployments, and help you follow best practices. What we need is an AI agent.

Why do we need an agent?

Let’s start at the base of modern AI: the Large Language Model (LLM).

LLMs work on prediction. Give an LLM a prompt, and it will try to predict the right answer (a completion). Everything we do with AI and text generation is powered by LLMs. GPT-3, GPT-3.5 and GPT-4 are all LLMs. The problem is that they are limited to their initial training data. These models cannot access the outside world. They are a brain in a box.
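To get a feel for what "prediction from training data" means, here’s a toy sketch. It is not how a real LLM works internally (those are neural networks trained on huge corpora); it’s a tiny word-pair counter I made up for illustration, and `TRAINING_TEXT`, `train_bigrams` and `predict_next` are all hypothetical names. It does show the brain-in-a-box problem: the model can only predict from what it saw in training.

```python
from collections import Counter, defaultdict

# Made-up "training data" for the toy model.
TRAINING_TEXT = (
    "the agent uses a tool . the agent reads the result . "
    "the model predicts the next word ."
)

def train_bigrams(text):
    """Count which word follows which in the training text."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    if word not in counts:
        return None  # nothing in the training data to predict from
    return counts[word].most_common(1)[0][0]

counts = train_bigrams(TRAINING_TEXT)
print(predict_next(counts, "the"))         # a word seen during training
print(predict_next(counts, "kubernetes"))  # never seen, so no prediction
```

Anything that happened after training (or was never in the training text) is invisible to the model, which is the gap the rest of this post is about.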

You have a few different options depending on your use case. You can use fine-tuning, where the model undergoes another training stage. Fine-tuning is excellent and has a lot of use cases (like classification), but it still doesn’t let you use live data. You can also use embeddings. These let you effectively extend the memory of your AI: you retrieve the passages most relevant to a question and place them in the prompt, so the model can draw on more data than fits in its context window at once. Embeddings help a lot, but they don’t help the LLM take action in the outside world.
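Here’s a minimal sketch of the embeddings idea, assuming made-up three-dimensional vectors. In a real setup the vectors would come from an embedding model and live in a vectorstore; `DOCS`, `retrieve` and `build_prompt` are hypothetical names used only for this illustration.

```python
import math

# Hypothetical notes with hand-made 3-d "embeddings" (real ones come from an embedding model).
DOCS = {
    "Notes on virtual machine sizing": [0.9, 0.1, 0.0],
    "Notes on storage tiers": [0.1, 0.9, 0.1],
    "Notes on identity and secrets": [0.0, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Similarity of two vectors: close to 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def retrieve(query_vector, k=1):
    """Return the k document texts whose vectors are most similar to the query."""
    ranked = sorted(
        DOCS,
        key=lambda text: cosine_similarity(query_vector, DOCS[text]),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(question, query_vector):
    """Put the retrieved text in front of the question before sending it to the LLM."""
    context = "\n".join(retrieve(query_vector))
    return f"Answer using this context:\n{context}\n\nQuestion: {question}"

print(build_prompt("Which storage tier should I use?", [0.1, 0.8, 0.2]))
```

The LLM itself is unchanged here. All the work happens before the prompt is sent, which is why this approach adds knowledge but not the ability to act.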

The other option is to use an AI agent.

What is an Agent?

Here’s the simplest definition:

An AI agent is powered by an LLM, and it uses tools (like Google Search, a calculator, or a vectorstore) to interact with the outside world.

That way, you can take advantage of the communication skills of an LLM, and also work on real-world problems. Without an agent, LLMs are limited to things like chatbots, classification and generative text. With agents, you can have a bot that can pull live information and make changes in the world. You’re giving your brain in a box a body.

How can we do this? Well, I’m going to be using LangChain, which comes with multiple agent implementations. These are based on ReAct, a system outlined in a paper by researchers at Princeton University and Google. The details are complicated, but the implementation is fairly simple: you tell your AI model to respond in a certain style. You ask it to think things through step by step, and then take actions using tools. LLMs can’t use tools by default, so they’ll try to make up what the tools would do. That’s when you step in and do the thing the AI was trying to fake. For example, if you give it access to Google, it will just pretend to make a Google search. You set up the tools so that you make an actual Google search and then feed the results back into the LLM.
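Here’s a minimal sketch of that loop. Everything in it is made up for illustration: `fake_llm` is a scripted stand-in for a real model, `calculator` is a toy tool, and the Thought / Action / Action Input / Observation text format is only modeled on the ReAct style, not copied from LangChain’s internals.

```python
import operator
import re

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}

def calculator(expression):
    """Toy tool: evaluates 'a op b' where op is one of + - * /."""
    left, op, right = expression.split()
    return str(OPS[op](float(left), float(right)))

def fake_llm(transcript):
    """Scripted stand-in for an LLM (an assumption, not a real model)."""
    if "Observation:" not in transcript:
        # First turn: the "model" picks a tool, then invents the tool's result.
        return (
            "Thought: I should use the calculator.\n"
            "Action: calculator\n"
            "Action Input: 12 * 7\n"
            "Observation: 90\n"
            "Final Answer: 90"
        )
    # Later turn: answer using the last real observation in the transcript.
    observation = transcript.rsplit("Observation:", 1)[1].strip().splitlines()[0]
    return f"Thought: I now know the answer.\nFinal Answer: {observation}"

ACTION_RE = re.compile(r"Action: (.*?)\nAction Input: (.*)")

def run_agent(question, llm, tools, max_steps=5):
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        # Cut the output at "Observation:" so the invented tool result is thrown away.
        output = llm(transcript).split("Observation:")[0]
        match = ACTION_RE.search(output)
        if match is None:
            # No tool requested, so treat the output as the final answer.
            return output.split("Final Answer:")[-1].strip()
        tool_name, tool_input = match.group(1).strip(), match.group(2).strip()
        # This is where we step in: run the real tool and feed the result back.
        observation = tools[tool_name](tool_input)
        transcript += f"{output}Observation: {observation}\n"
    return "Stopped: step limit reached."

print(run_agent("What is 12 * 7?", fake_llm, {"calculator": calculator}))
```

Notice that the scripted model "guesses" 90, but the loop discards that guess and substitutes the real calculator result. That swap is the whole trick.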

The results can seem magical.

Example: AI Agent with Google Search

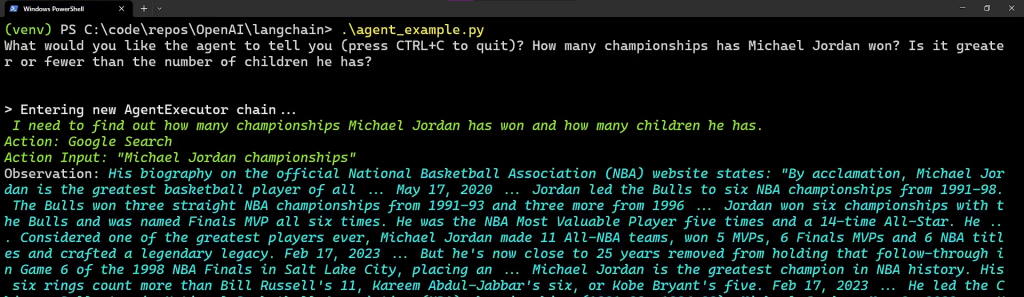

Let’s start with a simple agent that has access to two tools.

from langchain.agents import load_tools
from langchain.agents import initialize_agent
from langchain.llms import OpenAI

# Assumes OPENAI_API_KEY, GOOGLE_API_KEY and GOOGLE_CSE_ID are set in the environment

# We'll use an OpenAI model (Davinci by default) as the "brain" of our agent
llm = OpenAI(temperature=0)

# We'll provide two tools to the agent to solve problems: Google, and a tool for handling math
tools = load_tools(["google-search", "llm-math"], llm=llm)

# This agent is based on the ReAct paper
agent = initialize_agent(tools, llm, agent="zero-shot-react-description", verbose=True)

# Keep answering prompts until the user interrupts with CTRL+C
while True:
    prompt = input("What would you like the agent to tell you (press CTRL+C to quit)? ")
    agent(prompt)

These agent examples look best in video form:

Example: AI Agent with Access to External Documents (Vectorstore)

Here’s another example that uses a tool to pull information about Azure. I converted the official Azure documentation into a vectorstore (a searchable index of embeddings). Project Cyborg uses this so that our DevOps bot can understand Azure’s best practices and capabilities.

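from langchain.agents import Tool

# `chain` is assumed to be the question-answering chain built over the
# Azure documentation vectorstore, defined earlier (not shown here)
tools = [
    Tool(
        name="Azure QA System",
        func=chain,
        # The agent reads this description to decide when to use the tool
        description="useful for when you need to answer questions about Azure. Input should be a fully formed question.",
        return_direct=False
    )
]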
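The `description` field matters more than it looks, because the description text is what the LLM sees when it decides which tool to pick. Here’s a simplified sketch of how tool names and descriptions could be rendered into the agent’s prompt. `SimpleTool` and `render_tool_prompt` are hypothetical stand-ins written for this post, and the wording of the prompt is an approximation, not LangChain’s actual template.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class SimpleTool:
    """Hypothetical stand-in for a tool (not LangChain's Tool class)."""
    name: str
    func: Callable[[str], str]
    description: str

def render_tool_prompt(tools: List[SimpleTool]) -> str:
    """List every tool with its description, then tell the model which names are valid."""
    tool_lines = "\n".join(f"{t.name}: {t.description}" for t in tools)
    tool_names = ", ".join(t.name for t in tools)
    return (
        "You have access to the following tools:\n"
        f"{tool_lines}\n\n"
        f"Action: the action to take, should be one of [{tool_names}]"
    )

example_tools = [
    SimpleTool("Azure QA System", lambda q: "stub answer", "useful for answering questions about Azure."),
    SimpleTool("Calculator", lambda q: "stub result", "useful for doing math."),
]
print(render_tool_prompt(example_tools))
```

A vague description means the model has little to go on when choosing, so it’s worth writing it as carefully as you would write the prompt itself.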

Here it is in action:

AI Agents make LLMs useful

Chatbots are cool, and they are useful for many things, but they can’t do everything. Most of the time, your AI will need access to live information, and you’d like it to be able to do things for you rather than just be a very smart brain that can talk. Agents can do that for you. We’re figuring out how we can use them here at Electric Pipelines. If you want help figuring out how agents could help your business, let us know! We’d be happy to talk.